Classification, Segmentation, and Geometric Analysis of 3D Point Clouds using Deep Learning

Supervisors: Prof. Anath Fischer, Prof. Michael Lindenbaum

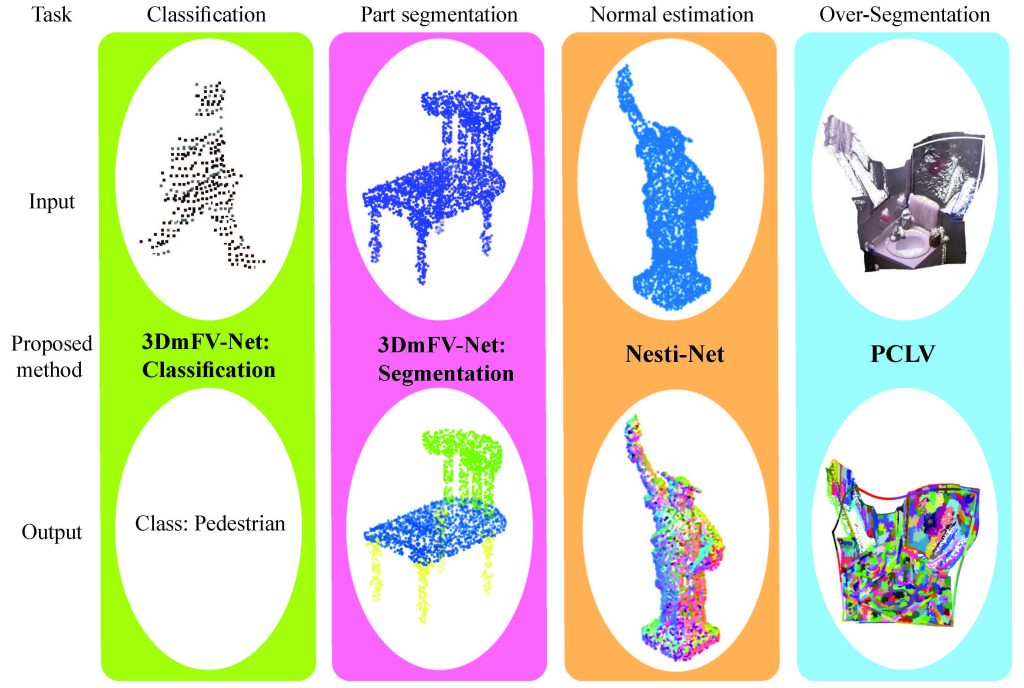

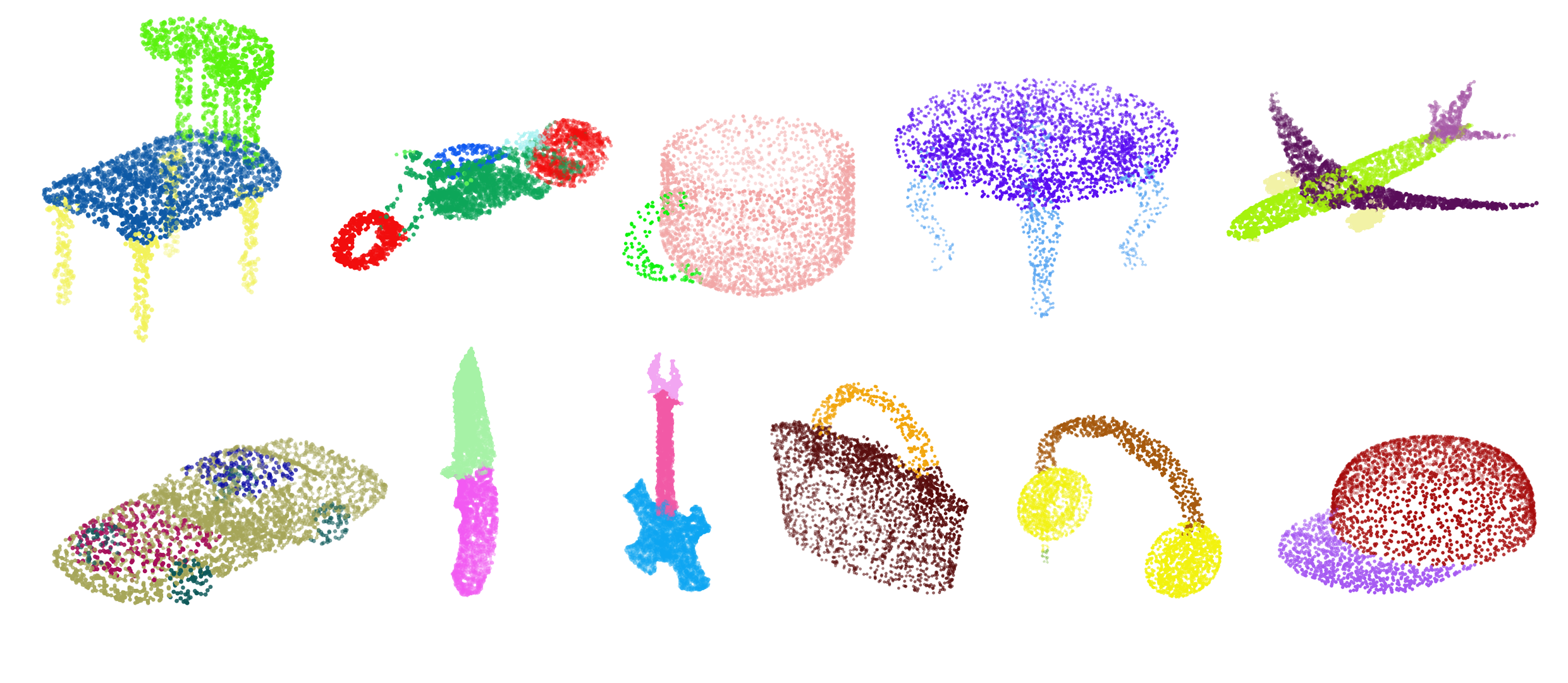

Modern robotic and vision systems are often equipped with a direct 3D data acquisition device, e.g. a LiDAR or RGBD camera, which provides a rich 3D point cloud representation of the surroundings. Point clouds have been used successfully for localization and mapping tasks, but their use in machine perception has not been fully explored. Recent advances in deep learning methods for images along with the growing availability of 3D point cloud data have fostered the development of new 3D deep learning methods that use point clouds for machine perception and semantic understanding. However, there are still many challenges associated with the point cloud’s unstructured and unordered nature which make them an unnatural input to deep learning methods. In this research we propose solutions to four machine perception, semantic understanding, and geometric processing tasks: point cloud classification, segmentation, normal estimation and over-segmentation. We propose a new global representation for point clouds called 3D Modified Fisher Vector (3DmFV). The representation is structured and independent of order and sample size. It enables using a newly designed 3D CNN architecture for both classification and part segmentation. The representation introduces a conceptual change for processing point clouds by using a global and structured spatial distribution instead of processing each point separately or compromising for discretization. It is based on the Fisher Vectors which are well known for their use in image classification. These vectors are essentially

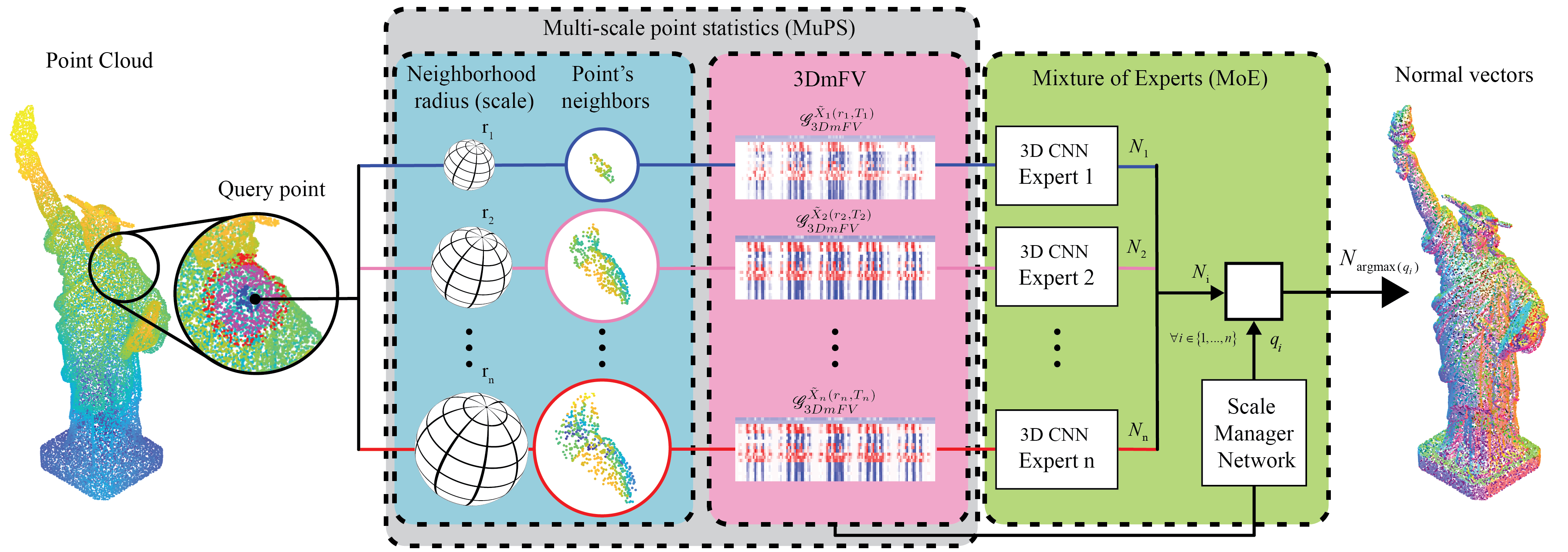

based on finding the gradients in parameter space and are asymptotically optimal for classification. We use the proposed representation to solve a fundamental and practical geometric processing problem of normal estimation using a new 3D CNN (Nesti-Net). To that end, we propose a local multi-scale representation called Multi Scale Point Statistics (MuPS) and show that using structured spatial distributions is also as effective for local multi-scale analysis as for global analysis. We further show that multi-scale data integrates well with a Mixture of Experts (MoE) architecture. The MoE enables the use of semi-supervised scale prediction to determine the appropriate scale for a given local geometry. Additionally, we propose a more traditional, non-learning based, over-segmentation

method for point clouds named Point Cloud Local Variation (PCLV) where we create local clusters of ”super-points” based on geometry, color and distance properties. We show that introducing new modalities improves the oversegmentation performance, however, the optimal modality combination is not trivial. For all methods we achieved state-of-the-art performance without using an end-to-end learning approach, and provide an extensive ablation study and performance analysis.
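To make the Fisher Vector idea more concrete, the following is a minimal sketch of a standard Fisher Vector computed for a point cloud with respect to a Gaussian Mixture Model whose components sit on a uniform 3D grid. It is not the 3DmFV formulation itself. The grid size `m`, the isotropic standard deviation, the normalization by the number of points, and the helper names `grid_gmm` and `fisher_vector` are all assumptions made for illustration.

```python
import numpy as np


def grid_gmm(m=3, sigma=None):
    """Hypothetical helper: m^3 Gaussians on a uniform grid in [-1, 1]^3."""
    axis = np.linspace(-1.0, 1.0, m)
    means = np.stack(np.meshgrid(axis, axis, axis, indexing="ij"), axis=-1)
    means = means.reshape(-1, 3)                      # (K, 3)
    k = means.shape[0]
    weights = np.full(k, 1.0 / k)                     # uniform mixture weights
    if sigma is None:
        sigma = 1.0 / m                               # assumed default width
    sigmas = np.full((k, 3), sigma)                   # diagonal std per Gaussian
    return weights, means, sigmas


def fisher_vector(points, weights, means, sigmas):
    """Fisher Vector of `points` (T, 3) w.r.t. a diagonal-covariance GMM.

    Returns a (K, 7) array: per Gaussian, the gradient of the log-likelihood
    w.r.t. its weight (1 value), mean (3 values), and std (3 values),
    averaged over the T points (an assumed normalization).
    """
    t = points.shape[0]
    diff = (points[:, None, :] - means[None, :, :]) / sigmas[None, :, :]  # (T, K, 3)

    # Log-density of each point under each Gaussian, then soft assignments.
    log_prob = (-0.5 * np.sum(diff ** 2, axis=2)
                - np.sum(np.log(sigmas), axis=1)
                - 1.5 * np.log(2.0 * np.pi))                              # (T, K)
    log_post = log_prob + np.log(weights)
    log_post -= log_post.max(axis=1, keepdims=True)   # numerical stability
    gamma = np.exp(log_post)
    gamma /= gamma.sum(axis=1, keepdims=True)         # posteriors, rows sum to 1

    sqrt_w = np.sqrt(weights)
    g_alpha = (gamma - weights).sum(axis=0) / sqrt_w                      # (K,)
    g_mu = (gamma[:, :, None] * diff).sum(axis=0) / sqrt_w[:, None]       # (K, 3)
    g_sigma = ((gamma[:, :, None] * (diff ** 2 - 1.0)).sum(axis=0)
               / (np.sqrt(2.0) * sqrt_w)[:, None])                        # (K, 3)

    # Summing over points makes the result order-independent; dividing by T
    # makes it independent of the sample size.
    return np.concatenate([g_alpha[:, None], g_mu, g_sigma], axis=1) / t
```

Because the Gaussians lie on a grid, the `(K, 7)` output can be reshaped to `(m, m, m, 7)`, which is the kind of structured tensor a 3D CNN can consume. The published 3DmFV may differ from this sketch in its choice of symmetric functions and normalization.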